Best AI API Platforms in 2026: Compared & Tested

TL;DR — quick picks

- AI/ML API: Best for multimodal apps (text + image + video + audio) via a single key. Widest model selection at 400+.

- OpenRouter: Best for LLM-only routing with provider diversity. Strong for text model selection and cost comparison.

- Fireworks AI / Together / DeepInfra: Best for raw inference speed at high throughput. Built for production volume.

Why AI API platform choice matters more in 2026

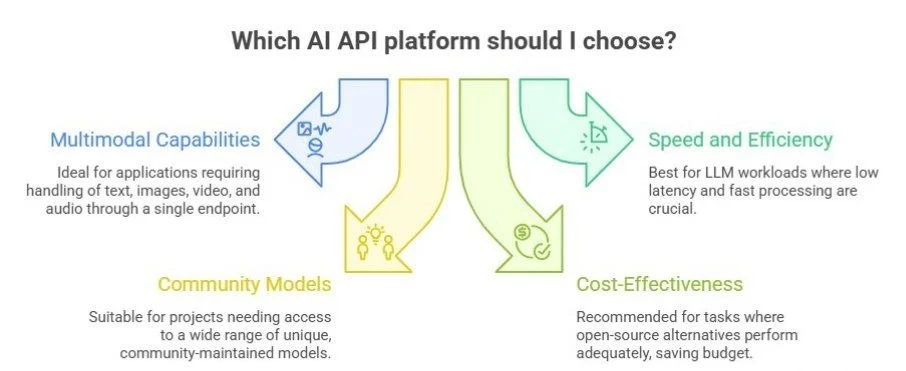

A year ago, picking an AI API was mostly about which language models you could access. GPT-4 here, Claude there. Today the decision is genuinely more complex and more consequential. The platforms have diverged sharply. Some have grown into full multimodal pipelines capable of handling text, images, video, and audio through a single endpoint. Others have doubled down on speed and routing efficiency for pure LLM workloads. A few have become home to hundreds of thousands of community-maintained models that you won't find anywhere else.

Getting this choice wrong has real costs. Build on a platform that can't handle your modality mix and you'll end up stitching together three separate vendor relationships, three billing accounts, and three different authentication patterns — all of which need to work reliably in production. Pick a slow inference provider for an agent that loops 50 times per user request and that latency compounds into something your users will notice. Overpay for frontier model access when open-source alternatives perform adequately for your task and you're burning budget you could spend elsewhere.

This comparison covers the eight platforms that came up most consistently in developer discussions, technical evaluations, and usage data in the first half of 2026. The focus is practical: what does each platform actually do well, where does it fall short, and what kind of product or use case is it genuinely suited for?

How the category has split in 2026

It helps to understand that "AI API platform" now describes at least three meaningfully different things:

- Unified multimodal hubs: platforms that aggregate models from multiple providers and serve them through one API, covering text, images, video, and audio. AI/ML API is the clearest example. You pay one bill, authenticate once, and get access to the full range of capabilities. The tradeoff is that you're dependent on the aggregator's uptime, routing logic, and pricing margins.

- LLM routers: platforms that specialize in language models only, but let you route dynamically across providers like OpenAI, Anthropic, Mistral, and Meta. OpenRouter is the prime example. The advantage is cost transparency and flexibility: you can see in real time what each provider charges and switch between them per request based on cost, latency, or availability.

- Inference infrastructure providers: platforms that run their own GPU clusters and optimize specifically for inference throughput. Fireworks AI, Together AI, and DeepInfra fall into this camp. They don't try to aggregate every provider; they host selected models and serve them as fast and as cheaply as possible.

Replicate and Hugging Face sit somewhat apart from these three categories. They're community-model platforms first, with inference as a secondary product. The catalog depth is extraordinary, but the production reliability story is different from the dedicated inference providers.

Feature matrix: 8 platforms × 10 parameters

Data accurate as of publication. Verify with each provider before committing, as features change frequently.

Platform deep-dives

AI/ML API — unified multimodal hub

AI/ML API has quietly built the broadest model catalog of any commercial API platform. The headline number is 400+ models, but the more meaningful differentiator is what those models cover: text generation from OpenAI, Anthropic, Google, Meta, MiniMax, and ByteDance; image generation including Stable Diffusion variants and FLUX; video generation; and text-to-speech — all accessible through a single API key and a single billing relationship.

This matters most for product teams building applications where users expect more than chat. A content generation tool might need GPT-5 for copywriting, FLUX for image generation, and a TTS model for audio output. AI/ML API handles all three without forcing you to manage three separate sets of API credentials and rate-limit buckets. The practical overhead reduction is real.

Crypto payment support is a distinctive feature that no other platform in this comparison offers. For teams building crypto-native applications or operating in jurisdictions where traditional payment methods are less practical, this removes a meaningful friction point.

OpenRouter — the LLM router

OpenRouter's core value proposition is routing intelligence. Rather than locking you into one LLM provider, it exposes 300+ language models from dozens of providers through a single OpenAI-compatible endpoint. You can switch between GPT-5, Claude Sonnet, Llama 3, Mistral, and Gemini per-request, or set automatic fallbacks and load balancing rules.
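To make the fallback idea concrete, here is a minimal client-side sketch. It is not OpenRouter's own mechanism, which is configured on the platform side; it only illustrates the pattern. The model names and the `fake_call` stand-in are hypothetical, and a real `call_model` would wrap whatever HTTP client you use.

```python
from typing import Callable, Sequence


class AllModelsFailed(Exception):
    """Raised when every model in the fallback chain has failed."""


def complete_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each model in order and return (model_used, response_text)."""
    errors: list[str] = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except Exception as exc:  # real code should catch the client's specific errors
            errors.append(f"{model}: {exc}")
    raise AllModelsFailed("; ".join(errors))


# Hypothetical stand-in for a real API call: the first model is "down".
def fake_call(model: str, prompt: str) -> str:
    if model == "primary-model":
        raise TimeoutError("upstream timeout")
    return f"[{model}] reply to: {prompt}"


used, text = complete_with_fallback(
    "Hello", ["primary-model", "backup-model"], fake_call
)
print(used, "->", text)
```

Passing `call_model` in as a parameter keeps the routing logic testable without network access.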

For developers who need to optimize cost without changing application code, this is genuinely useful. You can benchmark models for your specific task, identify the cheapest one that meets your quality bar, and route production traffic there — all without touching your integration. The real-time cost comparison dashboard makes this analysis accessible without custom tooling.
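The "cheapest model that meets your quality bar" selection can be sketched in a few lines. The candidate names and evaluation scores below are made-up placeholders, not measurements; the scores would come from your own task-specific evaluation, and the prices from the provider's current price list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    usd_per_million_tokens: float
    eval_score: float  # score on your own eval set, 0.0 to 1.0


def cheapest_passing(
    candidates: list[Candidate], min_score: float
) -> Optional[Candidate]:
    """Return the lowest-priced candidate whose score meets the bar, if any."""
    passing = [c for c in candidates if c.eval_score >= min_score]
    return min(passing, key=lambda c: c.usd_per_million_tokens, default=None)


# Placeholder data for illustration only.
candidates = [
    Candidate("frontier-model", 30.00, 0.95),
    Candidate("mid-tier-model", 2.00, 0.91),
    Candidate("small-open-model", 0.10, 0.78),
]

choice = cheapest_passing(candidates, min_score=0.90)
print(choice.name if choice else "no model meets the bar")
```

With these placeholder numbers the mid-tier model wins: the smallest model is cheaper but misses the bar, and the frontier model clears it at fifteen times the price.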

The hard limitation is that OpenRouter is LLM-only. No image generation, no video, no audio. Teams building multimodal products will need a separate solution alongside it, which partially defeats the simplicity advantage.

Fireworks AI — speed-first inference

Fireworks AI runs its own GPU infrastructure and has optimized it specifically for low-latency, high-throughput LLM inference. At approximately 150ms median latency, it's the fastest platform in this comparison under comparable load conditions. That advantage matters most for agentic systems — where a model calls tools, processes results, and loops multiple times per user action — and for real-time applications like voice interfaces or live coding assistants where perceived responsiveness is critical.
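A rough back-of-the-envelope calculation shows how per-call latency compounds in an agent loop. It assumes 50 strictly sequential calls per user request (the figure used earlier in this article) and the median latencies quoted in the FAQ, and it ignores tool execution time and output length, so treat the result as a lower bound.

```python
def sequential_latency_s(per_call_ms: float, calls: int) -> float:
    """Total wall-clock time, in seconds, for calls made one after another."""
    return per_call_ms * calls / 1000.0


# Median latencies quoted in this article; 50 calls is an assumed loop count.
for per_call_ms in (150, 180, 200):
    total = sequential_latency_s(per_call_ms, calls=50)
    print(f"{per_call_ms} ms x 50 calls = {total:.1f} s")
```

A 50 ms difference per call is invisible in a single chat turn but becomes 2.5 seconds across a 50-step loop.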

The model catalog is narrower (100+ models versus 300–400 on the aggregators), but it covers the models that production workloads actually need: the major open-source families and fine-tuned variants optimized for specific tasks. Partial audio support rounds out the feature set modestly. A free tier is available, and pricing is pay-as-you-go with no minimums.

Together AI & DeepInfra — solid inference alternatives

Together AI and DeepInfra occupy similar ground to Fireworks AI: inference-optimized infrastructure with competitive latency and pay-as-you-go pricing. Together AI adds video generation support, making it slightly more capable on the multimodal side. DeepInfra includes audio/TTS. Both are worth benchmarking against Fireworks for your specific model and use case — the latency differences between these three narrow at smaller batch sizes.

Replicate & Hugging Face — depth over speed

If you need a model that isn't hosted on any of the commercial inference platforms — a fine-tuned variant, a niche research model, something built on a specialized architecture — Replicate and Hugging Face are where you look. Replicate's per-second billing model works well for infrequent or bursty usage but can become expensive at sustained scale. Hugging Face's cold-start variability is the main operational challenge in production; shared inference endpoints can take several seconds to respond after idle periods.

Use cases: which platform for which job

AI API pricing in 2026

Every platform now runs pay-as-you-go with no minimum commitment. The cost range is wider than it looks at first glance — a 300x spread between the cheapest open-source inference and frontier model access.

The real cost driver in 2026 is model selection, not platform markup. A team running GPT-5 Pro at $30/1M tokens will spend more in one day than a team running an optimized open-source model at around $0.10/1M tokens spends in a month at the same volume: the price gap is 300x, while a month is only 30 days. Before optimizing for the cheapest platform, it's worth asking whether the frontier model is actually necessary. For many classification, summarization, and structured extraction tasks, models in the $0.50–$2 range perform comparably.
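The comparison is easy to check with your own numbers. The sketch below assumes a volume of 100M tokens per day purely for illustration and uses the per-million prices quoted in this article.

```python
def token_cost_usd(tokens: int, usd_per_million: float) -> float:
    """Cost of processing `tokens` tokens at a given price per 1M tokens."""
    return tokens / 1_000_000 * usd_per_million


DAILY_TOKENS = 100_000_000  # assumed volume, for illustration only

frontier_one_day = token_cost_usd(DAILY_TOKENS, 30.00)
open_source_month = token_cost_usd(DAILY_TOKENS * 30, 0.10)
mid_range_month = token_cost_usd(DAILY_TOKENS * 30, 2.00)

print(f"Frontier model, one day:      ${frontier_one_day:,.0f}")
print(f"$0.10/1M model, thirty days:  ${open_source_month:,.0f}")
print(f"$2.00/1M model, thirty days:  ${mid_range_month:,.0f}")
```

Note that the "more in a day than in a month" comparison holds against the cheapest open-source pricing; against a $2/1M model, a month of usage costs more than one frontier day, though still far less than a frontier month.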

For image generation, the per-image cost sounds trivial until you run a pipeline generating hundreds of images per day. A product that generates 500 images daily at $0.04 per image runs $600/month on images alone (500 × $0.04 × 30 days), which is worth factoring in early rather than discovering in the billing dashboard.
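The same arithmetic as a small helper, so it can be rerun across the per-image price range quoted in the FAQ. The 30-day month is an assumption.

```python
def monthly_image_cost_usd(
    images_per_day: int, usd_per_image: float, days: int = 30
) -> float:
    """Monthly spend on image generation at a flat per-image price."""
    return images_per_day * usd_per_image * days


# Low end, the article's example price, and high end of the quoted range.
for price in (0.005, 0.04, 0.05):
    cost = monthly_image_cost_usd(500, price)
    print(f"${price}/image -> ${cost:,.2f}/month")
```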

What to look for in an AI API platform: a practical checklist

Before committing to any platform, run through these evaluation criteria against your specific requirements:

- Does it support the modalities your product needs — now and in the next 12 months?

- What's the realistic latency for your typical request size under production load, not just the median headline number?

- Does the pricing model (per-token, per-second, per-image) match your usage pattern, or will it penalize your workload type?

- What's the reliability record? Platform outages for AI APIs have real product consequences.

- Is the API compatible with the OpenAI schema, or will switching require significant re-integration work?

- What data residency and privacy commitments does the platform make — does it log requests, train on your data?

- Is the free tier sufficient to do meaningful testing at realistic request sizes before you pay?
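For the latency item in this checklist, a median alone can hide a slow tail. The sketch below summarizes a list of measured latencies with the median and the 95th percentile using Python's standard `statistics` module. The sample values are invented to show the effect; in practice you would collect them by timing real requests of your typical size, for example with `time.perf_counter()` around each call.

```python
import statistics


def latency_summary(samples_ms: list[float]) -> dict[str, float]:
    """Median, 95th percentile, and maximum of measured latencies (ms)."""
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {
        "median": statistics.median(samples_ms),
        "p95": cuts[94],
        "max": max(samples_ms),
    }


# Invented samples: mostly fast, with two slow outliers (e.g. cold starts).
samples = [150.0] * 18 + [900.0, 2400.0]
summary = latency_summary(samples)
print(summary)
```

In this invented data set the median stays at 150 ms while the 95th percentile is several times higher, which is the gap the checklist item is asking you to look for.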

Frequently asked questions

What is the best AI API in 2026?

It depends on what you're building. For unified multimodal access — one key for text, images, video, and audio — AI/ML API is the strongest option. For LLM-only work with provider flexibility, OpenRouter is the cleaner choice. For raw inference speed at high throughput, Fireworks AI consistently leads on latency benchmarks.

How much does an AI API cost in 2026?

Pay-as-you-go is now standard across every major platform, with no minimum commitment. Token pricing ranges from around $0.10 per 1M tokens for open-source models to $30+ per 1M for top frontier models. Image generation typically runs $0.005–0.05 per image depending on the model and resolution settings.

Is there a free AI API in 2026?

Yes, all platforms in this comparison include a free tier with starter credits. AI/ML API, OpenRouter, and Fireworks AI each offer roughly $5–10 in free usage, which is enough to test most use cases before committing to paid access.

Can I access multiple AI providers through one API?

Yes. AI/ML API provides access to models from OpenAI, Anthropic, Google, Meta, MiniMax, ByteDance, and more through a single key. OpenRouter does the same for LLMs specifically, routing across providers to let you compare cost and speed per request.
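In practice, "one API" usually means an OpenAI-compatible chat completions schema: the request shape stays the same and only the `model` string (and, when switching platforms, the base URL) changes. The sketch below builds such a request with the standard library without sending it. The base URL, API key, and model identifiers are placeholders, not real endpoints; check each platform's documentation for the actual values.

```python
import json
import urllib.request


def build_chat_request(
    base_url: str, api_key: str, model: str, prompt: str
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values for illustration only.
BASE_URL = "https://api.example-aggregator.test/v1"
for model in ("provider-a/some-model", "provider-b/other-model"):
    req = build_chat_request(BASE_URL, "YOUR_API_KEY", model, "Hello")
    print(req.get_method(), req.full_url, json.loads(req.data)["model"])
```

Sending the request would be a call to `urllib.request.urlopen(req)`; it is left out here so the example runs without credentials.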

What is the fastest AI inference API in 2026?

Fireworks AI had the lowest median latency (~150ms) in our tests. Together AI (~180ms) and AI/ML API (~200ms) followed closely. Replicate and Hugging Face vary too much by model and cold-start state to give a reliable median.