DeepSeek 4: Open-Source AI That Rivals Closed Powerhouses

What Is DeepSeek 4, and Why Does It Matter?

In a landscape dominated by closed, costly AI systems, DeepSeek 4 arrives as a genuine disruption, combining frontier-level intelligence with openly released weights and no per-token fees when you host it yourself.

DeepSeek AI, the research lab behind the model, has spent years quietly outpacing expectations. With DeepSeek 4, it has built what many benchmark watchers call the most capable openly available model to date. On reasoning, coding, and multilingual tasks it matches GPT-4o and Claude 3.5, and on several benchmarks it scores higher.

Released in early 2026, DeepSeek 4 follows a lineage of increasingly capable models (DeepSeek-V2, DeepSeek-Coder-V2, DeepSeek-V3), each of which pushed the frontier for open research. Version 4 introduces a refined Mixture-of-Experts architecture, expanded context handling, and multimodal support through a companion vision variant, DeepSeek 4 V2, released alongside it.

For developers, this means production-grade inference without API bills scaling past your runway. For researchers, it means full model weights for fine-tuning, probing, and alignment work. For businesses, it means deploying capable AI pipelines without signing enterprise contracts or handing user data to third-party clouds.

How DeepSeek 4 Is Built: MoE, Context, and Multimodality

The engineering decisions inside DeepSeek 4 are as interesting as its output quality. Here's what's under the hood.

Mixture-of-Experts (MoE)

DeepSeek 4 has 400 billion total parameters but activates only about 100 billion of them per token. This sparse activation pattern is the core architectural bet: it lets the model store far more knowledge than a dense model with the same per-token compute, while keeping inference cost practical.
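To make sparse activation concrete, here is a minimal top-k routing sketch in plain Python. It is not DeepSeek 4's implementation: the expert count (8), top-k (2), dot-product router, and toy experts are all assumptions, chosen so that 2 of 8 experts run per token, mirroring the roughly one-quarter active ratio (100B of 400B) described above.

```python
import math
from typing import Callable, List

Vector = List[float]


def softmax(xs: Vector) -> Vector:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: Vector, gate_weights: List[Vector],
                experts: List[Callable[[Vector], Vector]], k: int = 2):
    """Route one token vector x to its top-k experts and mix their outputs."""
    # Router score per expert: dot product of x with that expert's gate vector
    logits = [sum(xi * wi for xi, wi in zip(x, w)) for w in gate_weights]
    # Keep only the k highest-scoring experts; the others are never evaluated
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    # Renormalize router scores over the selected experts only
    mix = softmax([logits[i] for i in top])
    out = [0.0] * len(x)
    for weight, i in zip(mix, top):
        y = experts[i](x)  # only k expert calls per token
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out, top


# Hypothetical toy setup: 8 experts, each scaling the input by a different factor
experts = [lambda v, s=s: [s * vi for vi in v] for s in range(1, 9)]
gate_weights = [[float(e), -float(e)] for e in range(8)]
out, used = moe_forward([1.0, 0.5], gate_weights, experts, k=2)
print(used)  # → [7, 6]
```

Only the selected experts' functions are evaluated, which is where the compute savings come from; every expert still has to be held in memory so the router can pick it.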

128K Token Context

A 128,000-token context window means you can feed small-to-medium codebases, lengthy legal contracts, or hours of meeting transcripts into a single prompt without chunking. For RAG pipelines, this substantially reduces the need for complex retrieval plumbing.
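A quick way to decide whether a document set fits in one prompt is to estimate its token count. The sketch below assumes roughly 4 characters per token (a common rule of thumb for English text, not a property of DeepSeek 4's tokenizer) and assumes the reply's tokens count against the same 128K window; the prompt-overhead and output budgets are hypothetical defaults.

```python
import math

CONTEXT_WINDOW = 128_000
CHARS_PER_TOKEN = 4.0  # assumption: rough English average; use the real tokenizer for exact counts


def estimate_tokens(text: str) -> int:
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(documents, prompt_overhead=500, max_output=4_000):
    """Return (fits, estimated_input_tokens) for a single-prompt request."""
    input_tokens = sum(estimate_tokens(d) for d in documents) + prompt_overhead
    # Assumption: output tokens share the context window with the input
    return input_tokens + max_output <= CONTEXT_WINDOW, input_tokens
```

For example, a 300,000-character contract comes out at about 75,000 tokens and fits with room to spare, while 600,000 characters would need chunking or retrieval. Run the model's actual tokenizer before relying on a borderline result.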

Multimodal Vision (V2)

The DeepSeek 4 V2 variant adds a vision encoder, allowing image and diagram understanding alongside text. This unlocks document understanding, screenshot analysis, and visual QA without switching to a separate model endpoint.

Training Data and Scale

DeepSeek 4 was trained on a curated multi-trillion token dataset spanning code repositories, scientific literature, multilingual web text, and synthetic reasoning chains. The training recipe incorporated supervised fine-tuning (SFT) followed by reinforcement learning from human feedback (RLHF) and direct preference optimization (DPO) for alignment.

Quantization options include 4-bit (GPTQ/AWQ) for consumer GPUs and 8-bit for balanced quality-and-speed deployments. Full BF16 precision is available for research-grade infrastructure.
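The quantization levels above translate into memory roughly as parameters × bits ÷ 8. This back-of-the-envelope sketch covers the weights only (no KV cache, activations, or quantization-scale overhead) and assumes the 400B total parameter count quoted earlier, since an MoE model must keep every expert loaded.

```python
def weight_memory_gb(params_billion: float, bits: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits / 8


TOTAL_PARAMS_B = 400  # total parameters quoted above; all experts must be resident

for label, bits in [("BF16", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: ~{weight_memory_gb(TOTAL_PARAMS_B, bits):.0f} GB")
# BF16: ~800 GB
# 8-bit: ~400 GB
# 4-bit: ~200 GB
```

Even at 4-bit, the weights alone come to roughly 200 GB, more than any single consumer GPU holds, so consumer-hardware deployments of a model this size presumably offload part of the weights to system RAM or use a smaller variant. Check the official model card for actual requirements.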

Advanced RAG Integration

One of the less-discussed wins in DeepSeek 4 is how well it handles retrieval-augmented generation out of the box. The model was specifically trained to cite sources, follow structured context injections, and gracefully decline when retrieved context doesn't contain the answer, reducing hallucination rates in RAG pipelines considerably compared to earlier generations.

This makes it a strong fit for knowledge-base assistants, internal documentation search, and customer support agents where factual grounding matters more than generative flair.
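Here is one way to structure the context injection described above: each retrieved passage is tagged with a source ID, and the system message asks for citations plus an explicit refusal when the answer is missing. The layout, the [id] citation style, and the refusal wording are assumptions rather than DeepSeek's documented prompt format, and the function name is hypothetical.

```python
from typing import Dict, List


def build_grounded_messages(question: str, docs: Dict[str, str]) -> List[dict]:
    """Build chat messages that inject retrieved passages tagged with source IDs."""
    context = "\n\n".join(f"[{doc_id}]\n{text}" for doc_id, text in docs.items())
    system = (
        "Answer using only the sources below. Cite every claim with its source ID "
        "in square brackets, e.g. [kb-12]. If the sources do not contain the answer, "
        "reply exactly: I don't know based on the provided sources."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": f"Sources:\n{context}\n\nQuestion: {question}"},
    ]
```

The result is a list of OpenAI-style chat messages, which the DeepSeek API accepts because it follows an OpenAI-compatible format; check the provider's documentation for the DeepSeek 4 model identifier to send them with.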

Spec Comparison: DeepSeek 4 vs. the Field

DeepSeek 4 Benchmarks: The Numbers Speak

Independent evaluations place DeepSeek 4 at or near the top of every major benchmark category. Here's a candid look at what it actually scores, and where it earns those scores.

Real-World Speed & Cost Efficiency

500+ tokens/sec on a single A100 (8-bit quantized)

30–50 tokens/sec on 2x RTX 4090 with 4-bit quantization

~$0.18 per million tokens via the official DeepSeek API

Running DeepSeek 4 at 4-bit quantization on consumer-grade dual-GPU setups brings frontier-class inference within reach of indie developers and academic labs. That changes the competitive dynamic significantly for anyone who has been pricing GPT-4o at scale.
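To see what ~$0.18 per million tokens means for a budget, here is a small estimator. It assumes a single blended rate for input and output tokens, as quoted above (many APIs price them separately), and the traffic figures in the example are hypothetical.

```python
PRICE_PER_MILLION_USD = 0.18  # blended rate quoted above; check current pricing


def monthly_api_cost(requests_per_day: int, avg_tokens_per_request: int,
                     days: int = 30) -> float:
    """Estimated API spend in USD for a month of steady traffic."""
    total_tokens = requests_per_day * avg_tokens_per_request * days
    return total_tokens / 1_000_000 * PRICE_PER_MILLION_USD


print(f"${monthly_api_cost(10_000, 2_000):.2f}")  # → $108.00
```

At 10,000 requests a day averaging 2,000 tokens each, a 30-day month is 600 million tokens, or about $108.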

What DeepSeek 4 Can Actually Do

Strong benchmarks are one thing. Here's where DeepSeek 4 earns its reputation in real workflows.

AI Agents

Long-horizon planning, tool-use reasoning, and instruction-following make DeepSeek 4 a strong backbone for autonomous agent pipelines built on frameworks like LangChain, AutoGen, or CrewAI.
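Agent frameworks hide it, but the core step of a tool-using agent is simple: the model returns a structured tool call, your code executes it, and the result goes back as a message. This sketch assumes the OpenAI-style function-calling format (an id plus a function name and JSON-encoded arguments); the get_weather tool and the run_tool_call helper are hypothetical.

```python
import json
from typing import Any, Callable, Dict


def get_weather(city: str) -> str:
    """Hypothetical stub tool for illustration."""
    return f"Sunny in {city}"


# Registry of local tools the agent is allowed to call
TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def run_tool_call(tool_call: dict) -> dict:
    """Execute one OpenAI-style tool call and build the 'tool' message to send back."""
    name = tool_call["function"]["name"]
    # Arguments arrive as a JSON-encoded string, not a dict
    args = json.loads(tool_call["function"]["arguments"])
    if name not in TOOLS:
        result = f"error: unknown tool {name!r}"
    else:
        result = TOOLS[name](**args)
    return {"role": "tool", "tool_call_id": tool_call["id"], "content": str(result)}
```

In a full loop you would append the returned message to the conversation and call the model again until it answers without requesting a tool.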

Enterprise RAG

Its 128K context and grounded citation behavior reduce hallucinations in retrieval pipelines. It's particularly suited to internal knowledge bases, legal document analysis, and compliance tooling.

Code Generation

With a 95.1% HumanEval score, DeepSeek 4 produces clean, runnable code across Python, JavaScript, Rust, Go, and SQL. It also handles refactoring, test generation, and code review fluently.

Multilingual Apps

DeepSeek AI's roots in Chinese-language research give DeepSeek 4 exceptional bilingual performance. It's among the highest-scoring models for Chinese-English translation and cross-lingual reasoning tasks.

Coding Example: DeepSeek 4 in Action

Here's a sample prompt and the model's output for a common developer task: building a rate-limiting middleware in Python.

```python
# User prompt: "Write a Redis-backed rate limiter middleware for a FastAPI app.
# Limit each IP to 100 requests per minute. Return 429 on breach."

# DeepSeek 4 output (condensed):
import redis.asyncio as redis
from fastapi import Request
from fastapi.responses import JSONResponse
from starlette.middleware.base import BaseHTTPMiddleware


class RateLimiterMiddleware(BaseHTTPMiddleware):
    def __init__(self, app, redis_url: str, limit: int = 100, window: int = 60):
        super().__init__(app)
        # Async client, so Redis round trips don't block the event loop
        self.r = redis.from_url(redis_url)
        self.limit = limit
        self.window = window

    async def dispatch(self, request: Request, call_next):
        # request.client is None when the server doesn't report a client address
        client_ip = request.client.host if request.client else "unknown"
        key = f"rl:{client_ip}"
        current = await self.r.incr(key)
        if current == 1:
            # First request in this window: start the expiry clock
            await self.r.expire(key, self.window)
        if current > self.limit:
            # Return the 429 directly: an HTTPException raised inside
            # BaseHTTPMiddleware bypasses FastAPI's exception handlers
            return JSONResponse(status_code=429, content={"detail": "Rate limit exceeded"})
        return await call_next(request)
```

Output generated in ~1.2 seconds on the DeepSeek API, with no follow-up prompting.
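The middleware above is a fixed-window counter: INCR counts requests per IP, and EXPIRE resets the count when the window ends. To see that logic without a Redis server, here is a hypothetical in-memory equivalent with an injectable clock. It is single-process only and not thread-safe, so treat it as a teaching aid rather than a drop-in replacement.

```python
import time
from typing import Callable, Dict, Tuple


class FixedWindowLimiter:
    """In-memory fixed-window counter, mirroring the INCR + EXPIRE pattern."""

    def __init__(self, limit: int = 100, window: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock
        self.counters: Dict[str, Tuple[float, int]] = {}  # key -> (window_start, count)

    def allow(self, key: str) -> bool:
        now = self.clock()
        start, count = self.counters.get(key, (now, 0))
        if now - start >= self.window:  # window expired: start a fresh one
            start, count = now, 0
        count += 1  # like INCR, rejected requests still count
        self.counters[key] = (start, count)
        return count <= self.limit
```

A known trade-off of fixed windows is that a client can send up to twice the limit in a short burst straddling a window boundary; sliding-window or token-bucket limiters smooth this out at the cost of a little more state.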

DeepSeek 4 vs. Competitors

Let's be specific about where DeepSeek 4 wins, where it's competitive, and where other models still have edges worth knowing.

vs Llama 3.1 405B

MoE Efficiency Wins

Llama 3.1 405B is a dense model: every token passes through all 405 billion parameters. DeepSeek 4's MoE design activates only ~100B per token, so you get comparable or better quality for roughly a quarter of the per-token compute.

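For self-hosting teams, this means more tokens per second from the same hardware, and therefore fewer GPUs for a given throughput target. Memory is a different matter: all 400B parameters must still be loaded, so the weight footprint is close to that of Llama 3.1 405B. DeepSeek 4 also outscores Llama 3.1 405B on MATH and HumanEval.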
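The compute claim can be checked with the common approximation of about 2 FLOPs per active parameter per token for a forward pass, ignoring attention cost and routing overhead. The ~100B active figure is the one quoted for DeepSeek 4; the helper name is hypothetical.

```python
def forward_flops_per_token(active_params_billion: float) -> float:
    """~2 FLOPs per active parameter per token (forward pass, attention ignored)."""
    return 2 * active_params_billion * 1e9


dense = forward_flops_per_token(405)  # Llama 3.1 405B: every parameter is active
moe = forward_flops_per_token(100)    # DeepSeek 4: ~100B active per token
print(f"MoE uses {moe / dense:.0%} of the dense model's per-token compute")
# → MoE uses 25% of the dense model's per-token compute
```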

vs Qwen 2.5 72B

Scale and Chinese NLP

Qwen 2.5 is an excellent model for its size class, but DeepSeek 4's far larger parameter count gives it a consistent edge on complex reasoning tasks. Both models are strong in Chinese-language understanding; DeepSeek 4's slight edge likely comes from its broader training coverage and reinforcement-learning alignment.

Qwen 2.5 wins on raw serving cost at smaller scale.

Quick Comparison: Pros and Cons

What to Know Before You Deploy

No model is perfect, and intellectual honesty about limitations matters for building trustworthy systems.

Hallucinations in Edge Cases

DeepSeek 4 performs exceptionally on well-represented topics but will still confidently confabulate in narrow expert domains, very recent events, and niche technical areas outside its training distribution. Always implement validation layers for factual claims in production.
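One simple validation layer, assuming answers cite sources with bracketed IDs such as [kb-1] (a hypothetical convention), is to reject answers that cite nothing or cite a source that was never retrieved. The function below is a sketch: it checks only that citations exist and point at real sources, not that the cited text actually supports the claim.

```python
import re
from typing import Dict, List

CITATION = re.compile(r"\[([^\[\]]+)\]")  # matches [source-id]


def validate_citations(answer: str, sources: Dict[str, str]) -> List[str]:
    """Return a list of problems; an empty list means the basic checks passed."""
    problems = []
    cited = CITATION.findall(answer)
    if not cited:
        problems.append("answer cites no sources")
    for doc_id in cited:
        if doc_id not in sources:
            problems.append(f"cites unknown source [{doc_id}]")
    return problems
```

Answers that fail can be retried, routed to a human, or replaced with a fallback response; stronger setups add a second pass that checks each claim against its cited passage.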

Bias and Cultural Coverage

Like all large models, DeepSeek 4 reflects the biases of its training data. Its bilingual Chinese-English strength means its coverage of other languages and cultures may be uneven. Test extensively on your target language and population before shipping.

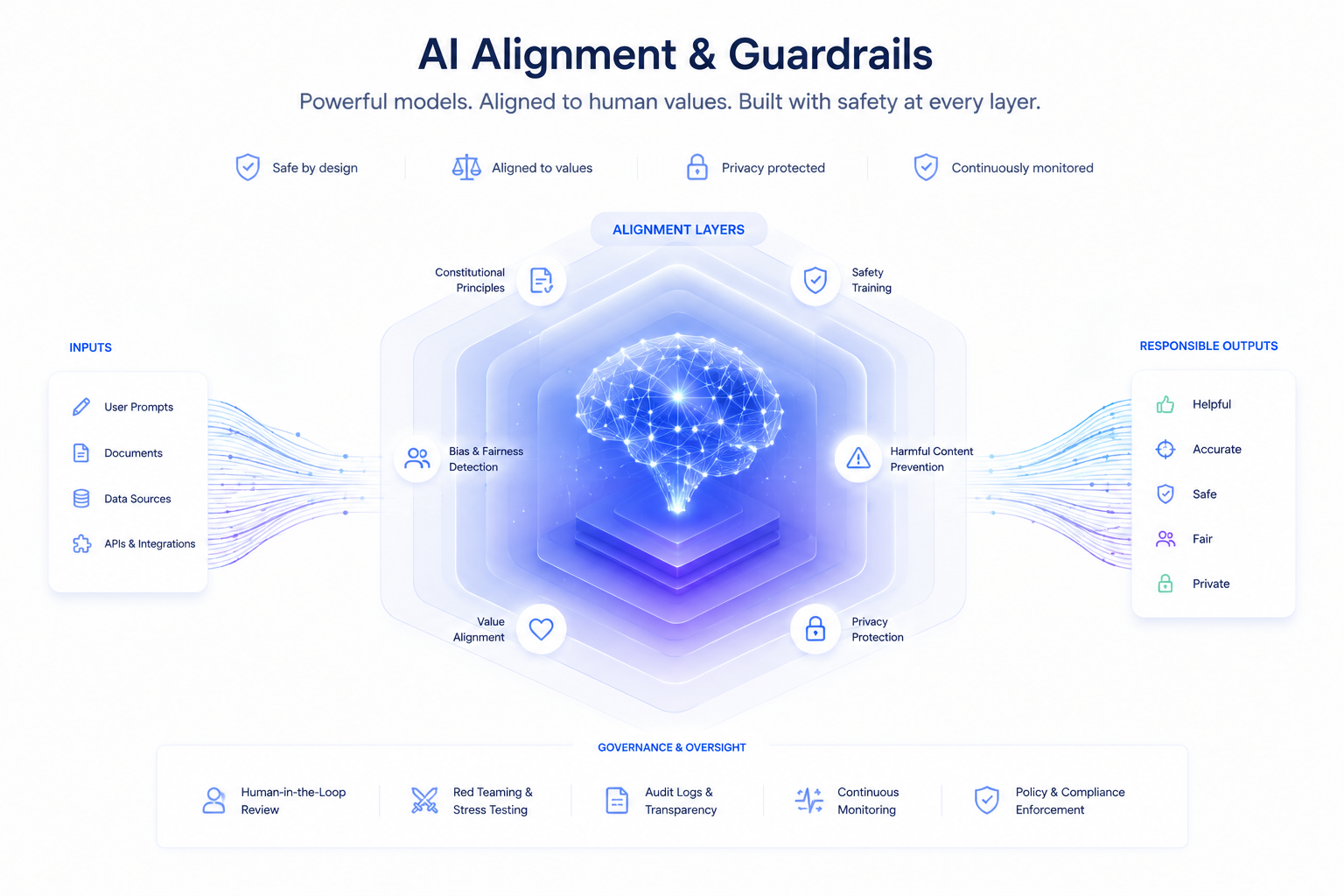

Safety Alignment

DeepSeek 4 was aligned with a combination of RLHF and DPO. The base weights are available for research, but the unaligned base model should not be deployed in consumer-facing contexts without additional safety fine-tuning or guardrails.

Conclusion

DeepSeek 4 delivers competitive benchmark performance across reasoning, coding, mathematics, and multilingual tasks. It does so with full weight transparency, a commercial-friendly license, and an API cost structure that genuinely democratizes access for developers and organizations that couldn't justify frontier model pricing at scale.

Is it perfect? No. Hallucinations persist in edge cases, the vision variant is still maturing, and self-hosting at full precision remains a hardware challenge. But the trajectory is clear, and the community building around DeepSeek 4 is already generating fine-tunes, tooling integrations, and deployment guides at a pace that suggests staying power.

For developers building AI-powered products, researchers probing model behavior, and businesses deciding where to anchor their AI infrastructure, DeepSeek 4 deserves serious evaluation. The barriers to trying it are low and the upside is real.

Don't miss 2026's top open model: deploy DeepSeek 4 today on aimlapi.com, with an instant playground and enterprise-ready access.