Gemma 4 – Google DeepMind’s Most Powerful Open-Weight AI Model Family

What is Gemma 4?

Gemma 4 is Google DeepMind's fourth generation of open-weight language models, announced and released on April 2, 2026. It's built from the same underlying research as Gemini 3, which means you're essentially getting a distilled version of Google's flagship proprietary model — downloadable, runnable on your own hardware, and free to use commercially.

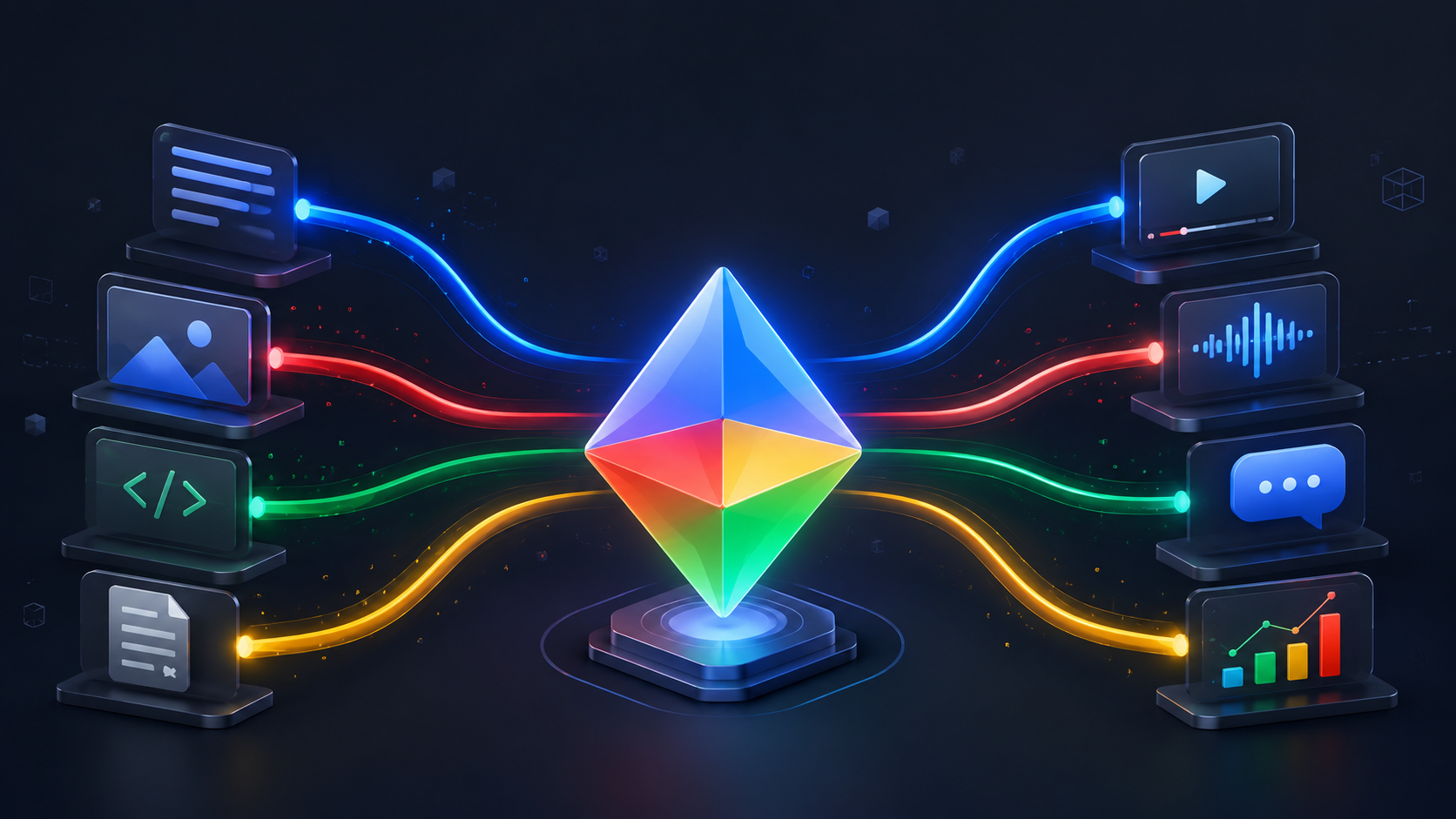

What sets this release apart from previous Gemma generations isn't just the number jump. It's a fundamental rethinking of what an open model should be capable of. Every size in the family — from the tiny E2B that fits on a phone to the 31B dense model for workstations — handles text, images, and code natively. The two smaller models go further with native audio input. And every model in the family supports structured function calling and native system prompts, making them production-ready for agentic workflows out of the box.

Since the first Gemma release, developers have downloaded the family over 400 million times and built more than 100,000 community variants — the Gemmaverse. Gemma 4 is the answer to what that community asked for next.

The previous Gemma license had restrictions that made legal teams nervous, particularly in enterprise environments. Gemma 4 ships under Apache 2.0 for the first time in the family's history. No monthly active user caps. No acceptable-use policy enforcement. Full commercial freedom.

Gemma 4 model lineup & hardware targets

The "E" in E2B and E4B stands for effective parameters. These models use Per-Layer Embeddings (PLE) — a secondary embedding table used for fast lookup at every decoder layer. The technique inflates the parameter count on paper without adding proportional inference cost.

Gemma 4 benchmark performance

The benchmark numbers here aren't incremental improvements. On some tasks, particularly math and agentic tool use, the jump from Gemma 3 to Gemma 4 is more than 4×. The 31B model currently holds the #3 position among all open models on the Arena AI text leaderboard.

Key benchmarks: Gemma 4 31B vs prior state-of-the-art

Gemma 4 vs Gemma 3 vs Llama 4 vs Qwen 3.5

The open-weight model landscape in 2026 is genuinely competitive. Here's where Gemma 4 sits relative to the main alternatives developers consider.

Head-to-head: key differentiators

The Llama 4 question

Llama 4 Scout offers a 10M token context window, genuinely useful for whole-codebase ingestion. If that's your specific bottleneck, it's worth benchmarking. But for the small-to-medium size tier, Gemma 4 leads on reasoning, coding, and science benchmarks while also running on edge hardware that Llama 4 doesn't target. The licensing situation also differs: Llama 4 uses a community license with Meta's acceptable-use policy, while Gemma 4's Apache 2.0 is more permissive for sovereign and commercial deployments.

The Qwen 3.5 question

Qwen 3.5's 397B flagship sits in a size class where Gemma 4 has no entry. At the 26B–32B tier, Gemma 4 31B reports 85.2% on MMLU Pro, a figure to weigh against the numbers reported for Qwen 3.5 27B. Both use Apache 2.0. The practical differentiator is on-device tooling: Google's LiteRT-LM, Android integration, and Qualcomm/MediaTek partnerships give Gemma 4 a significantly better edge deployment story.

Gemma 4 multimodal capabilities

This is the first Gemma generation where every single model in the lineup handles multimodal input natively — not as a fine-tuned add-on, but as a core architectural feature.

Image understanding

All four models. Variable aspect ratio & resolution. Configurable token budgets (70–1,120 per image) to trade detail for speed.

Video comprehension

26B and 31B models. Up to 60 seconds at 1 fps. Covers scene understanding, temporal reasoning, and chart reading across frames.

Native audio input

E2B and E4B only. USM-style conformer encoder. Up to 30 seconds of audio. Handles speech recognition and audio Q&A directly on-device — no cloud call required.

OCR & document parsing

Strong performance on reading small text in images, scanned documents, charts, and screenshots, particularly at higher visual token budgets (560–1,120).

Chart & visual data

Excels at reading dashboards, bar charts, pie charts, and data tables from screenshots, including complex multi-chart business dashboards.

Bounding box output

Can output bounding box coordinates for UI element detection, enabling browser automation, screen-parsing agents, and accessibility tooling.

Hardware requirements at a glance

Run Gemma 4 via API — no GPU required

Access Gemma 4 instantly through AI/ML API. No setup, no hardware costs. Pay per token.

Gemma 4 Apache 2.0 license: what changes for you

Previous Gemma releases shipped under a custom Google license with restrictions on commercial use, content policies, and monthly active user thresholds. Enterprise legal teams flagged it, and many organizations defaulted to Mistral or Qwen instead.

Gemma 4 ships under Apache 2.0. Here's what that concretely unlocks:

For enterprise teams building products on open models, the licensing clarity matters as much as the benchmark numbers. Apache 2.0 means you can evaluate, prototype, and ship to production without a legal review cycle.

Gemma 4 architecture deep-dive

Under the hood, Gemma 4 introduces several design decisions worth knowing if you're deploying, fine-tuning, or building on top of these models.

Alternating attention layers

Layers alternate between local sliding-window attention (512–1,024 tokens) and global full-context attention. Local layers handle nearby token relationships efficiently; global layers do long-range reasoning. This lets the model run a 256K context window without the memory overhead of full attention at every layer.
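To make the memory argument concrete, here is a minimal sketch of which positions each layer type attends to and how many tokens each layer has to cache. The layout (every `global_every`-th layer is global), the layer count, and all function names are illustrative assumptions, not Gemma 4's published configuration.

```python
from typing import List, Optional


def attended_positions(query_pos: int, window: Optional[int]) -> range:
    """Positions a causal query at `query_pos` may attend to.

    window=None means global (full causal) attention; otherwise only the
    most recent `window` tokens, including the query itself, are visible.
    """
    start = 0 if window is None else max(0, query_pos - window + 1)
    return range(start, query_pos + 1)


def layer_windows(num_layers: int, window: int, global_every: int) -> List[Optional[int]]:
    """Assumed layout: every `global_every`-th layer is global, the rest are local."""
    return [None if (i + 1) % global_every == 0 else window for i in range(num_layers)]


def cached_tokens_per_layer(seq_len: int, windows: List[Optional[int]]) -> List[int]:
    """Tokens each layer must keep in its KV cache at sequence length `seq_len`."""
    return [seq_len if w is None else min(seq_len, w) for w in windows]


# Hypothetical 6-layer stack: five local layers (1,024-token window), one global layer.
windows = layer_windows(num_layers=6, window=1024, global_every=6)
per_layer = cached_tokens_per_layer(seq_len=262_144, windows=windows)  # 256K context
fraction_of_full = sum(per_layer) / (len(per_layer) * 262_144)
print(per_layer)
print(f"cache size relative to full attention everywhere: {fraction_of_full:.2f}")
```

In this toy layout the local layers each cache 1,024 tokens regardless of context length, so only the global layer grows with the sequence. The real saving depends on the actual ratio of local to global layers.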

Dual RoPE (Proportional RoPE)

Sliding-window layers use standard rotary position embeddings (RoPE). Global layers use Proportional RoPE (p-RoPE), which scales positional encodings relative to the sequence length. This is what enables stable quality at 256K tokens — a known weak point for models that simply extend standard RoPE.
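The sketch below shows standard RoPE on a single vector, plus one simple way to scale positions relative to sequence length. The article does not give the p-RoPE formula, so `scaled_position` is plain position interpolation used as a stand-in; treat it, the `reference_len` parameter, and the function names as assumptions rather than Gemma 4's actual method.

```python
import math
from typing import List


def rope_angles(position: float, head_dim: int, base: float = 10_000.0) -> List[float]:
    """Rotation angle for each (even, odd) channel pair at a given position."""
    if head_dim % 2 != 0:
        raise ValueError("head_dim must be even")
    return [position * base ** (-2 * i / head_dim) for i in range(head_dim // 2)]


def apply_rope(vec: List[float], position: float, base: float = 10_000.0) -> List[float]:
    """Rotate consecutive channel pairs of `vec` by their position-dependent angles."""
    out: List[float] = []
    for i, theta in enumerate(rope_angles(position, len(vec), base)):
        x, y = vec[2 * i], vec[2 * i + 1]
        out += [
            x * math.cos(theta) - y * math.sin(theta),
            x * math.sin(theta) + y * math.cos(theta),
        ]
    return out


def scaled_position(position: int, seq_len: int, reference_len: int) -> float:
    """Stand-in scaling (position interpolation, NOT the published p-RoPE formula):
    shrink positions so sequences longer than `reference_len` map back into it."""
    return position * min(1.0, reference_len / seq_len)


query = [1.0, 0.0, 0.5, -0.5]
local_q = apply_rope(query, position=1000)                                  # standard RoPE
global_q = apply_rope(query, scaled_position(1000, seq_len=2000, reference_len=1000))
```

Because each pair is only rotated, the vector's length is unchanged; position affects the angle between queries and keys, not their magnitude.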

Shared KV cache

The final N transformer layers reuse key/value tensors from earlier layers. The practical effect: meaningfully lower memory consumption and faster inference without a measurable quality penalty.
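A rough back-of-the-envelope sketch of the saving, assuming every layer that keeps its own cache stores keys and values for the full sequence. The layer count, head sizes, and the choice of 8 shared layers are hypothetical values picked for round numbers, not Gemma 4's real dimensions.

```python
def kv_cache_bytes(
    num_layers: int,
    shared_layers: int,
    seq_len: int,
    num_kv_heads: int,
    head_dim: int,
    bytes_per_value: int = 2,  # 16-bit cache entries
) -> int:
    """Approximate KV cache size when the last `shared_layers` layers
    reuse K/V tensors from earlier layers instead of storing their own."""
    if not 0 <= shared_layers < num_layers:
        raise ValueError("shared_layers must be in [0, num_layers)")
    layers_with_own_cache = num_layers - shared_layers
    per_token_per_layer = 2 * num_kv_heads * head_dim * bytes_per_value  # keys + values
    return layers_with_own_cache * seq_len * per_token_per_layer


# Hypothetical model: 32 layers, 8 KV heads of dimension 128, 8,192-token context.
baseline = kv_cache_bytes(32, 0, 8192, 8, 128)
shared = kv_cache_bytes(32, 8, 8192, 8, 128)
print(f"no sharing: {baseline / 2**30:.2f} GiB")
print(f"last 8 layers shared: {shared / 2**30:.2f} GiB")
```

Under these assumptions the cache shrinks in direct proportion to the number of layers that stop storing their own keys and values.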

Vision encoder

A learned 2D position encoder uses multidimensional RoPE to preserve original image aspect ratios. The visual token budget is configurable — 70, 140, 280, 560, or 1,120 tokens per image — so you can tune the detail/speed tradeoff for your use case. OCR and document parsing benefit from higher budgets; video frame understanding typically uses lower ones.
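The budget tradeoff is easy to reason about with a little arithmetic. This sketch uses the five budgets listed above and assumes each video frame is encoded as one image at the chosen budget; the helper names are hypothetical and do not correspond to any Gemma API.

```python
VISUAL_TOKEN_BUDGETS = (70, 140, 280, 560, 1120)


def pick_budget(max_tokens_per_image: int) -> int:
    """Largest supported budget that does not exceed the given cap."""
    candidates = [b for b in VISUAL_TOKEN_BUDGETS if b <= max_tokens_per_image]
    if not candidates:
        raise ValueError("cap is below the smallest supported budget (70)")
    return max(candidates)


def video_visual_tokens(duration_s: int, fps: int, budget: int) -> int:
    """Visual tokens for a clip, assuming one image's worth of tokens per frame."""
    if budget not in VISUAL_TOKEN_BUDGETS:
        raise ValueError(f"unsupported budget: {budget}")
    return duration_s * fps * budget


print(pick_budget(600))                  # 560
print(video_visual_tokens(60, 1, 70))    # 4200
print(video_visual_tokens(60, 1, 1120))  # 67200
```

A 60-second clip at 1 fps costs 16 times more context at the highest budget than at the lowest, which is one reason to keep per-frame budgets low for video.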

USM audio encoder (E2B and E4B)

The same conformer architecture used in Gemma 3n handles up to 30 seconds of audio input. It supports both speech recognition and audio question answering, running entirely on-device without any cloud call.

Per-Layer Embeddings (E2B and E4B)

The edge models use a technique called PLE — a parallel embedding table that feeds an additional signal into every decoder layer. It adds to the stated parameter count but uses far less compute during inference than the parameter number implies. This is why E2B runs in under 1.5 GB of memory while delivering capability well above what its size suggests.
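A small sketch of why a per-layer embedding table can be large on paper yet cheap at inference time: the table's size scales with the vocabulary, but each token only reads one row per layer. The vocabulary size, layer count, and embedding width below are hypothetical values chosen for illustration, not published Gemma 4 numbers.

```python
def ple_table_params(vocab_size: int, num_layers: int, ple_dim: int) -> int:
    """Parameters stored in a table holding one `ple_dim` vector per token per layer."""
    return vocab_size * num_layers * ple_dim


def ple_values_read_per_token(num_layers: int, ple_dim: int) -> int:
    """Values actually looked up for a single token: one row per decoder layer."""
    return num_layers * ple_dim


# Hypothetical configuration.
vocab_size, num_layers, ple_dim = 262_144, 30, 256

stored = ple_table_params(vocab_size, num_layers, ple_dim)
touched = ple_values_read_per_token(num_layers, ple_dim)
print(f"stored parameters: {stored:,}")
print(f"values read per token: {touched:,}")
```

Under these assumptions the table holds about two billion parameters, while a single token reads only a few thousand of them through lookups rather than matrix multiplications.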

Gemma 4 release date & family timeline

People also ask about Gemma 4

What is the Gemma 4 release date?

Google DeepMind released Gemma 4 on April 2, 2026. All four model sizes (E2B, E4B, 26B MoE, and 31B) were released simultaneously alongside pre-trained and instruction-tuned variants.

Is Gemma 4 multimodal?

Yes, all four Gemma 4 models support image input natively. The two larger models (26B and 31B) also process video up to 60 seconds. The two smaller models (E2B and E4B) add native audio input via a conformer encoder that handles speech recognition on-device.

Can I run Gemma 4 on my laptop or phone?

Yes. The E2B model runs in under 1.5 GB of memory, targeting current-generation smartphones including Android devices with Qualcomm Dragonwing or MediaTek Dimensity NPUs. The E4B targets 8 GB laptops. The 26B MoE runs on a 24 GB GPU with Q4 quantization. All models have day-zero support in Ollama, llama.cpp, LM Studio, and MLX for Apple Silicon.
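As a quick sanity check on the 24 GB figure, the sketch below estimates the weights-only footprint at a given bit width. It assumes "26B" and "31B" mean 26 and 31 billion stored parameters and treats Q4 as exactly 4 bits per weight; real Q4 formats carry some extra overhead, and the KV cache and activations need memory on top of this.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Weights-only memory in decimal gigabytes (ignores KV cache and activations)."""
    return params_billions * bits_per_weight / 8


for name, params in [("26B MoE", 26), ("31B", 31)]:
    q4 = weight_memory_gb(params, 4)
    fp16 = weight_memory_gb(params, 16)
    print(f"{name}: ~{q4:.1f} GB at 4-bit, ~{fp16:.1f} GB at 16-bit")
```

At 4 bits the 26B weights come to roughly 13 GB, which leaves headroom on a 24 GB GPU for the cache and runtime overhead; at 16 bits the same weights would not fit.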

What license does Gemma 4 use?

Gemma 4 uses the Apache 2.0 license — the first time in the Gemma family's history. This means no monthly active user limits, no acceptable-use policy restrictions, and full freedom for commercial, enterprise, and sovereign AI deployments. Previous Gemma generations used a restrictive custom license.

How does Gemma 4 perform on benchmarks?

Gemma 4 31B currently ranks #3 among all open models on the Arena AI text leaderboard (Elo ~1452). On AIME 2026 (competitive math), it scores 89.2%, up from Gemma 3 27B's 20.8%. On GPQA Diamond (graduate-level science), it scores 84.3% versus Gemma 3's 42.4%. On τ2-bench (agentic tool use), it scores 86.4% versus Gemma 3's 6.6%.
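The size of each jump is easier to compare as a ratio. This short sketch uses only the scores quoted above; the helper name is illustrative.

```python
def improvement_ratio(old_score: float, new_score: float) -> float:
    """How many times higher the new score is than the old one."""
    return new_score / old_score


# (Gemma 3 score, Gemma 4 31B score), in percent, as quoted in this article.
scores = {
    "AIME 2026": (20.8, 89.2),
    "GPQA Diamond": (42.4, 84.3),
    "tau2-bench": (6.6, 86.4),
}

ratios = {name: improvement_ratio(old, new) for name, (old, new) in scores.items()}
for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x")
```

The math and agentic tool-use scores improve by more than 4×, while GPQA Diamond roughly doubles.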
