Qwen3.5 Plus

A frontier-class hosted model built for the agentic AI era. One million tokens of context. Native vision-language architecture. Adaptive reasoning at industrial scale.

Unlike models that bolt on vision as an afterthought, Qwen3.5 is a native vision-language model trained end-to-end on trillions of text, image, and video tokens simultaneously. This early-fusion approach gives Qwen3.5 Plus a qualitative advantage in tasks requiring deep semantic integration of text and visual content.

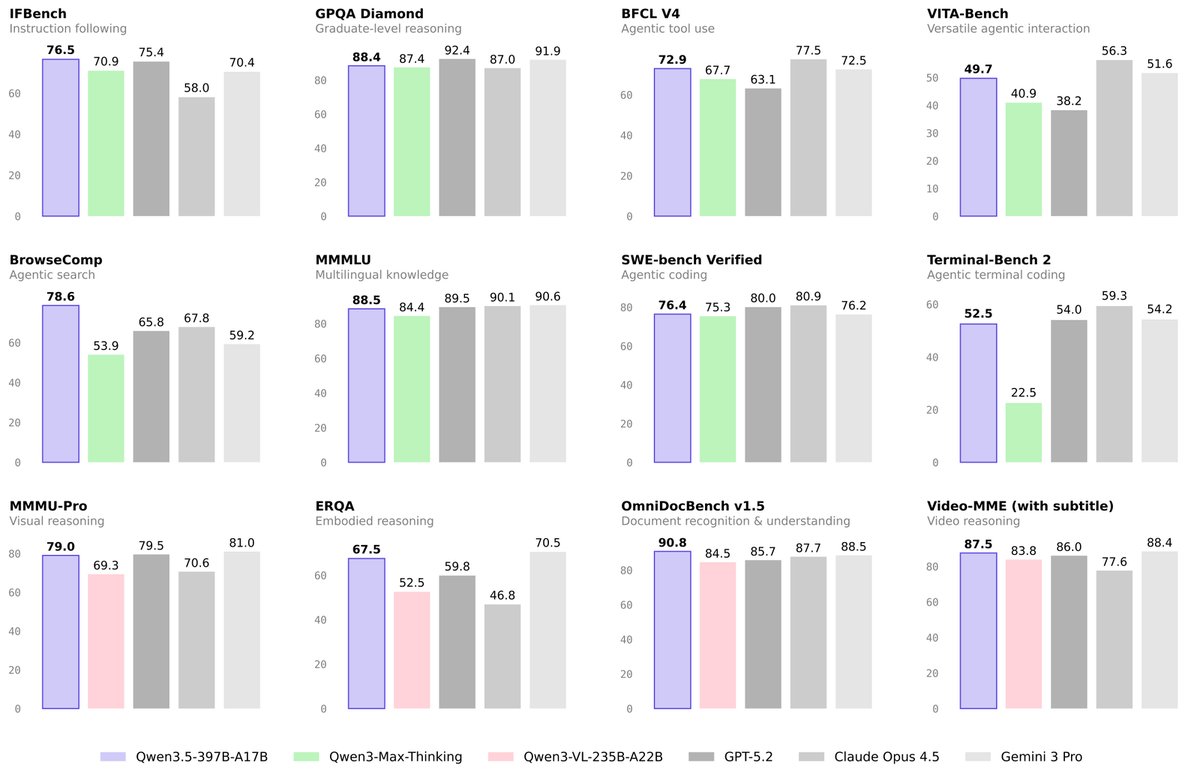

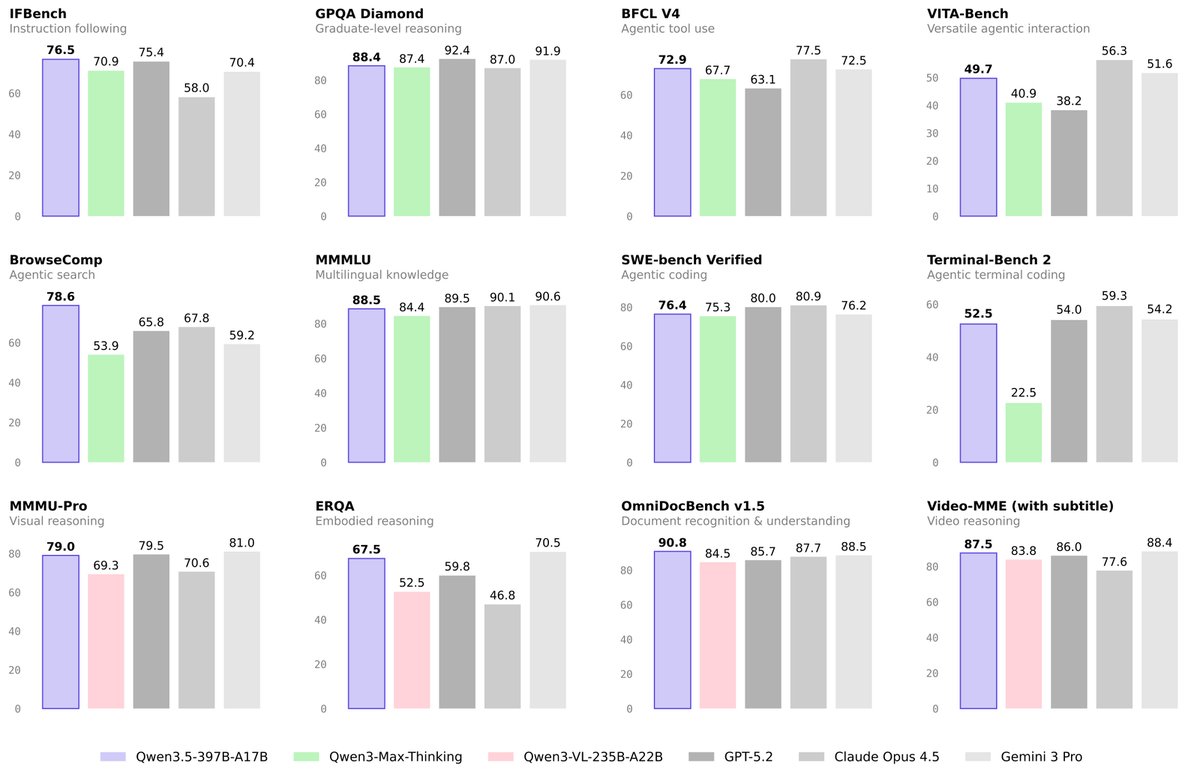

Benchmarks show the 3.5 series achieving parity with frontier-class models from OpenAI and Anthropic across reasoning, coding, agentic tasks, and multimodal understanding, while delivering significant efficiency improvements over the prior generation.

The following headline capabilities distinguish Qwen3.5 Plus from the broader model landscape as of early 2026.

Qwen3.5 Plus supports an extended context window of up to 1 million tokens, compared to 256K tokens for the base Qwen3.5 model. This enables single‑session analysis of large codebases, multi‑day chat logs, legal corpora, or multi‑document research workflows without manual chunking.
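Before sending a large corpus in one request, it can help to check roughly whether it fits the window. The sketch below is a minimal illustration, not part of any Qwen SDK: the 4-characters-per-token ratio and the `reserved_for_output` headroom are assumptions, and the model's real tokenizer will give different counts.

```python
import math

# Context limit taken from the text above (1 million tokens).
CONTEXT_LIMIT = 1_000_000


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate. The chars-per-token ratio is an assumed heuristic."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(documents, context_limit=CONTEXT_LIMIT, reserved_for_output=8_000):
    """Return (fits, estimated_total) for a list of document strings.

    reserved_for_output is hypothetical headroom kept free for the reply.
    """
    budget = context_limit - reserved_for_output
    total = sum(estimate_tokens(doc) for doc in documents)
    return total <= budget, total
```

For anything close to the limit, a count from the provider's actual tokenizer is the safer check than this character-based estimate.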

Auto mode, exclusive to Qwen3.5 Plus, decides for each request whether to invoke extended reasoning, run a search query, call a code interpreter, or respond directly. This matches compute expenditure to actual task complexity without any user-level configuration.

Because the model was trained on UI screenshots from mobile and desktop interfaces, Qwen3.5 Plus can perceive, interpret, and act on graphical interfaces: clicking buttons, filling in forms, and navigating software environments autonomously across both Android and desktop operating systems.

The combination of long context, native vision, and agentic reasoning makes Qwen3.5 Plus particularly well-suited for the following real-world deployment scenarios.

Ingest entire annual reports, legal contracts, or technical manuals in a single API call. Extract structured information, cross-reference clauses, and generate executive summaries without chunking or RAG orchestration.

Load an entire repository into context for debugging, refactoring, dependency analysis, or test generation. The 1M token window accommodates even large enterprise monorepos without losing cross-file context.
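One way to load a repository into a single prompt is to concatenate its source files with a path header before each one, so the model can keep track of cross-file references. The sketch below is illustrative only: the `### FILE:` delimiter, the extension filter, the 900,000-token budget, and the 4-characters-per-token estimate are all assumptions rather than anything the Qwen API requires.

```python
from pathlib import Path


def pack_repository(root, extensions=(".py", ".md"), token_budget=900_000,
                    chars_per_token=4.0):
    """Concatenate matching files under root into one prompt string.

    Returns (prompt, skipped_paths, estimated_tokens_used). Files that would
    push the estimate past token_budget are skipped and reported.
    """
    root = Path(root)
    parts, skipped, used = [], [], 0
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in extensions:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        rel = path.relative_to(root).as_posix()
        # Hypothetical delimiter so each file's origin stays visible.
        block = f"### FILE: {rel}\n{text}\n"
        cost = int(len(block) / chars_per_token) + 1  # rough estimate
        if used + cost > token_budget:
            skipped.append(rel)
            continue
        parts.append(block)
        used += cost
    return "".join(parts), skipped, used
```

Reporting skipped files, instead of silently truncating, makes it clear when a repository does not fit and some other strategy is needed for the remainder.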

Jointly interpret charts, tables, images, and text from research papers. This suits literature review automation, data extraction from figures, and cross-document synthesis in scientific and medical domains.
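A request that combines paper text with a figure might be assembled as below. This is a sketch under a stated assumption: it uses the OpenAI-style chat message layout with `text` and `image_url` content parts, and the source does not say which request format the hosted Qwen3.5 Plus endpoint accepts. The helper names are hypothetical, and no network call is made.

```python
import base64


def image_part(image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Wrap raw image bytes as an OpenAI-style image content part (data URL)."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "type": "image_url",
        "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
    }


def build_figure_question(paper_text: str, figure_bytes: bytes, question: str) -> list:
    """Build a single user message holding the text, the figure, and the question."""
    return [
        {
            "role": "user",
            "content": [
                {"type": "text", "text": paper_text},
                image_part(figure_bytes),
                {"type": "text", "text": question},
            ],
        }
    ]
```

Keeping the text and the figure in one message lets the question refer to both at once, which is the kind of joint interpretation described above.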

Qwen3.5 Plus is especially strong when you need a single hosted model that can handle very long contexts, multimodal reasoning, and tool‑augmented workflows without prohibitive cost. For large‑scale copilots, document analytics, and production chat agents, it offers a compelling mix of performance, flexibility, and pricing versus other frontier‑class LLMs.
