Context: 128K · Input: $0.294 per 1M tokens · Output: $0.441 per 1M tokens · Type: Chat · Status: Active

DeepSeek-V3.1 Release

DeepSeek V3.1 is a refined upgrade of the DeepSeek hybrid reasoning model, featuring enhanced tool integration, improved language consistency, and better performance across benchmarks.

Its open-source license and strong capabilities make it ideal for advanced AI applications in coding, research, and agent-based workflows.

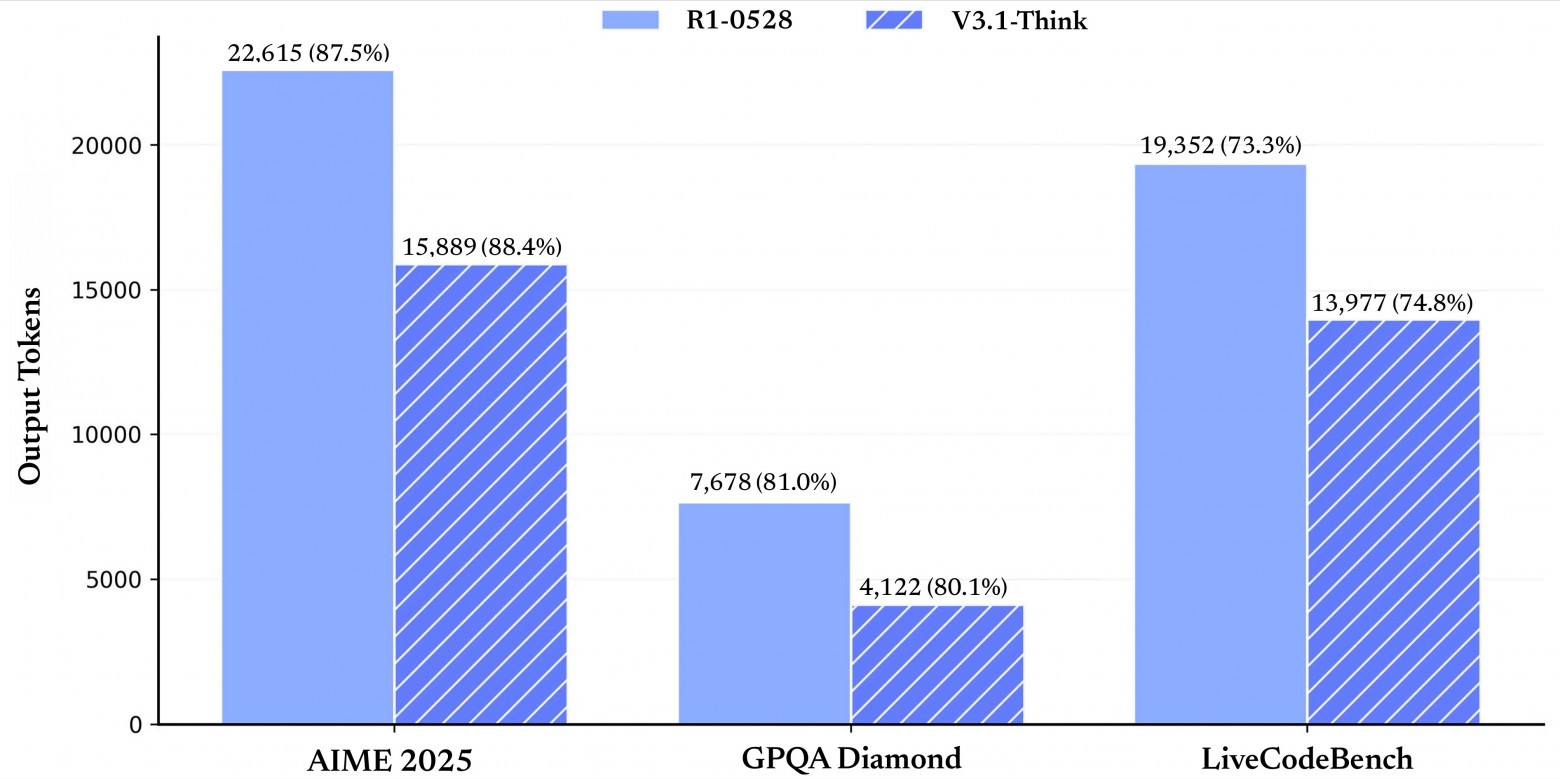

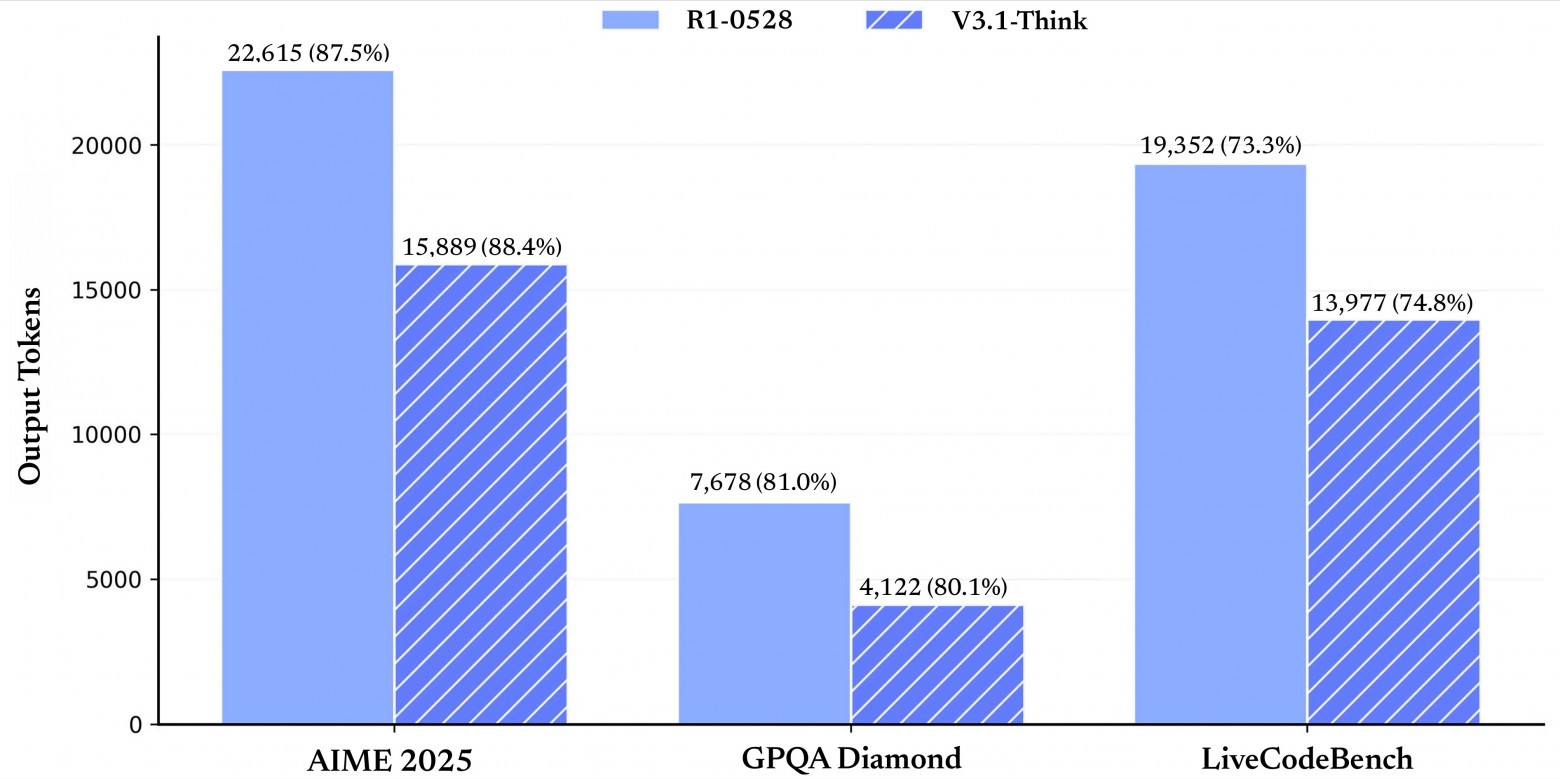

DeepSeek V3.1 is the latest evolution of DeepSeek’s hybrid reasoning model, building on the V3 foundation with improved language consistency, agent capabilities, and reasoning efficiency. It supports both thinking and non-thinking modes, so the same model can serve fast interactive responses as well as complex, multi-step reasoning in coding and search agents. The update gives developers and enterprises a more stable, reliable model for advanced research, software development, and agentic workflows.

DeepSeek V3.1 supports an input context window of up to 128K tokens, with refined tokenization designed for multimodal inputs that combine text and high-resolution image features. This capacity lets the model work through complex, multi-source documents and long conversations in a single pass. Output length scales dynamically up to 50,000 tokens and is optimized for efficient real-time interaction, including narrative generation and detailed image captioning.
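The limits above can be turned into a simple pre-flight check before sending a request. The following is a minimal sketch, not part of any official SDK: `plan_request` is a hypothetical helper, and reading "128K" as 128,000 tokens is an assumption (some providers mean 131,072).

```python
# Limits as stated in the description above.
# Assumption: "128K" is interpreted as 128,000 tokens.
MAX_INPUT_TOKENS = 128_000
MAX_OUTPUT_TOKENS = 50_000


def plan_request(prompt_tokens: int, requested_output_tokens: int) -> dict:
    """Hypothetical helper: report whether a prompt fits the input window
    and clamp the requested output length to the stated maximum."""
    if prompt_tokens < 0 or requested_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return {
        # True when the prompt fits within the input context window.
        "fits_input": prompt_tokens <= MAX_INPUT_TOKENS,
        # Number of tokens that would need to be trimmed, if any.
        "input_overflow": max(0, prompt_tokens - MAX_INPUT_TOKENS),
        # Requested output length, capped at the stated output limit.
        "max_output_tokens": min(requested_output_tokens, MAX_OUTPUT_TOKENS),
    }
```

Token counts have to come from whatever tokenizer the serving endpoint uses; the sketch only compares numbers and does not tokenize text itself.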

Speed & Latency: DeepSeek V3.1 Reasoner incorporates improved sparse attention mechanisms and optimized memory management, achieving inference latencies approximately 30% lower than DeepSeek-V3.0 under equivalent hardware conditions.

Accuracy: Demonstrates strong few-shot and zero-shot performance on benchmarks for visual question answering, document summarization, and legal reasoning, including on factual consistency metrics.

Multilingual Support: Expands language portfolio to over 90 languages, offering high-fidelity translation and culturally nuanced context comprehension beyond previous releases.

• 1M input tokens: $0.294

• 1M output tokens: $0.441
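As a rough illustration of how these per-million-token prices translate into a per-request cost, here is a minimal sketch. The function name `estimate_cost` is hypothetical, and the calculation assumes flat pricing with no caching discounts, tiers, or minimum charges, none of which are described on this page.

```python
from decimal import Decimal

# Listed prices in dollars per 1M tokens, taken from the bullets above.
INPUT_PRICE_PER_M = Decimal("0.294")
OUTPUT_PRICE_PER_M = Decimal("0.441")
TOKENS_PER_M = Decimal(1_000_000)


def estimate_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Hypothetical helper: estimated cost in dollars for one request,
    assuming flat per-token pricing (an assumption, not a billing rule)."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    total = (
        Decimal(input_tokens) * INPUT_PRICE_PER_M
        + Decimal(output_tokens) * OUTPUT_PRICE_PER_M
    )
    return total / TOKENS_PER_M
```

For example, a request with 100,000 input tokens and 2,000 output tokens comes to 0.0294 + 0.000882 = $0.030282 under these assumptions. `Decimal` is used instead of floats so that small per-request amounts add up without rounding drift.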
