200K

1.3

4.16

Chat

Active

GLM-5

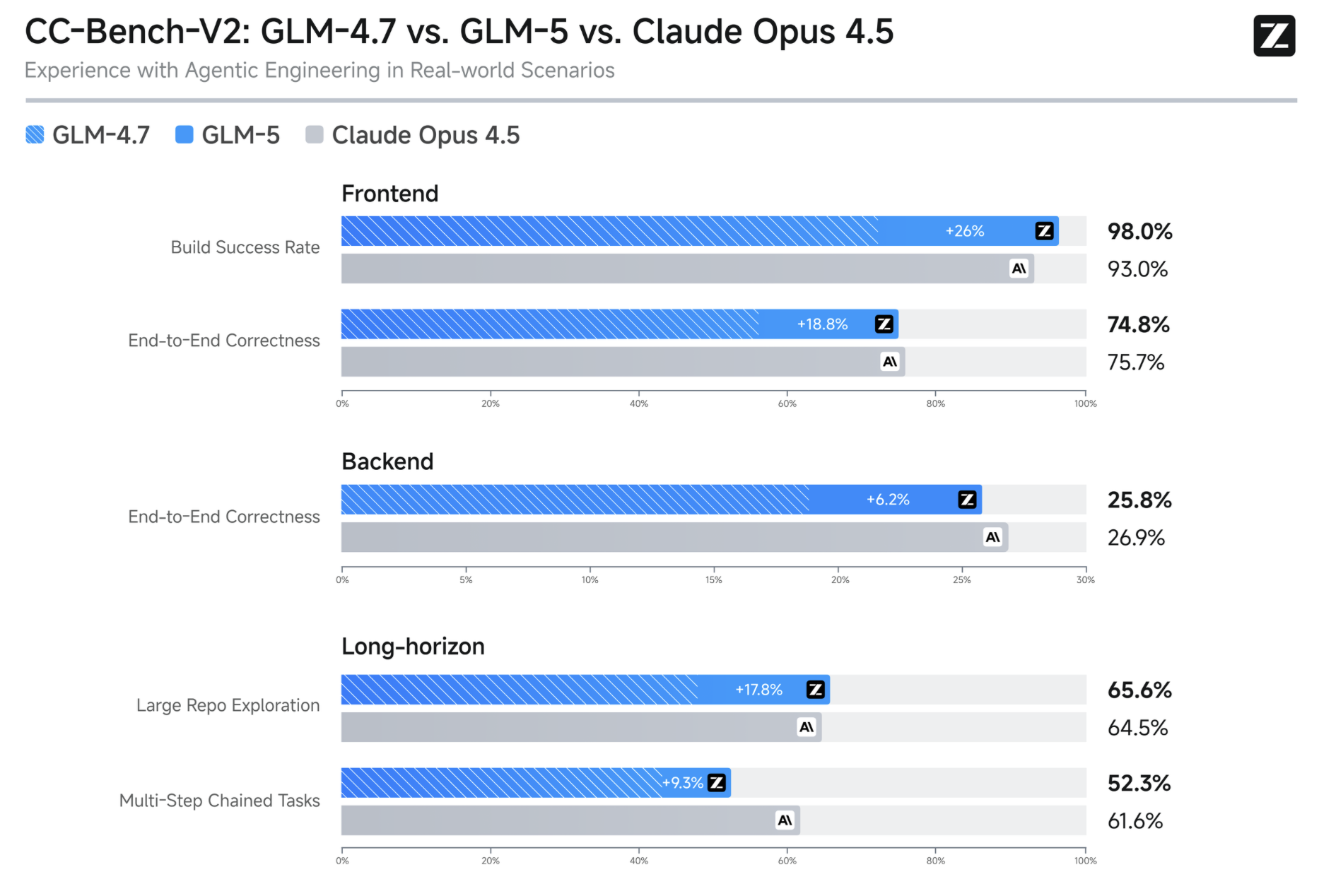

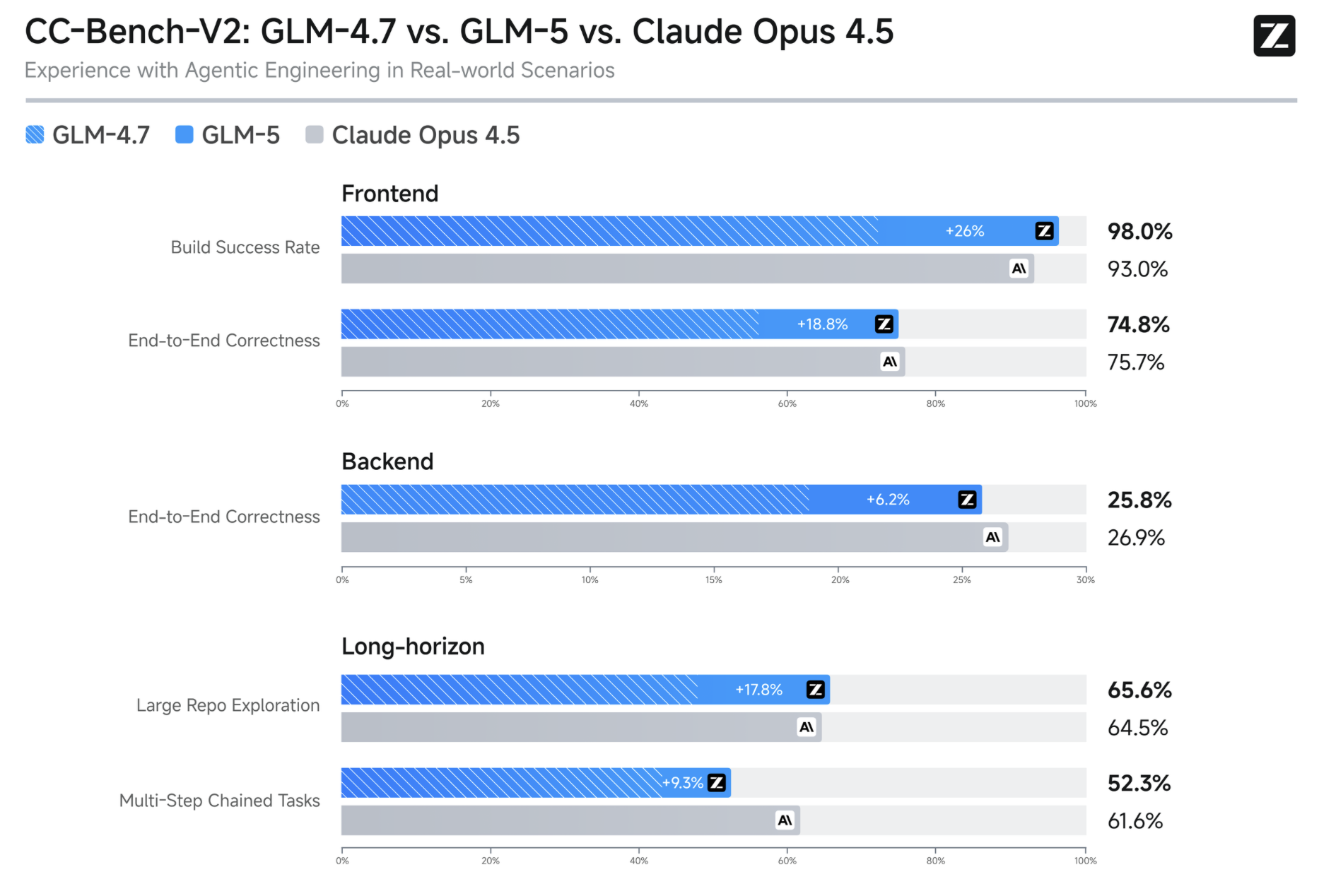

Designed to power modern AI products, the GLM-5 API delivers strong performance across text generation, structured outputs, code understanding, and complex analytical tasks.

With optimized inference efficiency and enterprise-ready reliability, GLM-5 is built for production environments that demand accuracy, speed, and cost transparency.

GLM-5 is an advanced LLM developed to support complex reasoning, long-context understanding, and multilingual text generation. It combines deep semantic comprehension with strong instruction following, enabling developers to build reliable AI assistants, automation workflows, coding copilots, and knowledge systems. The model is optimized for both conversational AI and structured outputs, making it suitable for chat interfaces, data extraction pipelines, agentic systems, and enterprise integrations.

GLM-5 is optimized for stable inference and predictable behavior in production environments. It follows instructions closely, hallucinates less than earlier-generation models, and retains context better over extended conversations.

GLM-5 is built to handle analytical tasks, multi-step reasoning, and structured data generation. It can reliably produce JSON outputs, formatted reports, and schema-aligned responses, making it ideal for backend integrations and automation tools.
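Even when a model produces JSON reliably, a backend integration would normally validate each reply before using it. The following is a minimal sketch of that check using only the Python standard library. The function name `parse_structured_reply`, the flat field-to-type schema, and the sample reply are illustrative assumptions and are not part of any GLM-5 SDK.

```python
import json


def parse_structured_reply(raw: str, required: dict[str, type]) -> dict:
    """Parse a model reply as JSON and check it against a flat schema.

    `required` maps field names to the Python type each field must have.
    This helper is hypothetical: it shows one way to guard a pipeline,
    not an official client API.
    """
    # json.loads raises json.JSONDecodeError (a ValueError subclass)
    # when the reply is not valid JSON.
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")

    for key, expected_type in required.items():
        if key not in data:
            raise ValueError(f"missing field: {key}")
        if not isinstance(data[key], expected_type):
            raise ValueError(
                f"field {key!r} should be {expected_type.__name__}, "
                f"got {type(data[key]).__name__}"
            )
    return data


# A made-up reply, standing in for text returned by the model.
reply = '{"invoice_id": "A-17", "total": 42.5, "paid": false}'
schema = {"invoice_id": str, "total": float, "paid": bool}
record = parse_structured_reply(reply, schema)
```

Raising a `ValueError` on any mismatch lets the caller decide whether to retry the request or reject the record, rather than passing malformed data downstream.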

GLM-5 supports extended context windows, allowing it to process large documents, maintain conversation continuity, and reason over complex multi-paragraph inputs. This makes it well-suited for research assistants, legal document analysis, and enterprise knowledge management systems.
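Documents larger than the context window still need to be split before they are sent. The sketch below packs paragraphs into chunks under an estimated token budget. The helper `split_for_context` is hypothetical, and the default of 4 characters per token is a rough rule of thumb rather than the behavior of GLM-5's tokenizer, so the real limit and ratio would need to be confirmed against the provider's documentation.

```python
def split_for_context(
    text: str, max_tokens: int, chars_per_token: float = 4.0
) -> list[str]:
    """Split text on blank lines into chunks under an estimated token budget.

    Token counts are approximated from character counts (an assumption).
    A single paragraph longer than the budget is kept whole as its own
    oversized chunk rather than being cut mid-sentence.
    """
    budget_chars = int(max_tokens * chars_per_token)
    chunks: list[str] = []
    current: list[str] = []
    current_len = 0

    for para in text.split("\n\n"):
        para_len = len(para) + 2  # +2 for the paragraph separator
        # Start a new chunk when adding this paragraph would exceed the budget.
        if current and current_len + para_len > budget_chars:
            chunks.append("\n\n".join(current))
            current, current_len = [], 0
        current.append(para)
        current_len += para_len

    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting on paragraph boundaries keeps each chunk readable on its own, which tends to matter for tasks such as legal or research document analysis where sentences should not be cut in half.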

GLM-5 performs effectively in code completion, debugging assistance, and technical documentation generation. It supports modern programming workflows and can be integrated into developer tools, CI/CD pipelines, and AI-powered IDE assistants.

Create high-quality blog posts, technical documentation, marketing copy, and multilingual content with strong consistency and structure.

Leverage structured output capabilities for data extraction, document parsing, and transformation into machine-readable formats.

Integrate GLM-5 into engineering workflows to accelerate development, improve code quality, and streamline debugging processes.

Use GLM-5 as the reasoning core for AI agents that carry out multi-step tasks, make decisions, and act on the context gathered along the way.
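The agent use case can be sketched as a loop in which the model either requests a tool or returns a final answer. Everything here is an assumption made for illustration: the `run_agent` function, the JSON reply convention (`{"tool": ..., "args": ...}` or `{"final": ...}`), and the message roles. The model is passed in as a plain callable so that the sketch does not depend on any particular client library.

```python
import json
from typing import Callable


def run_agent(
    model: Callable[[list[dict]], str],
    tools: dict[str, Callable[..., object]],
    task: str,
    max_steps: int = 5,
) -> str:
    """Run a simple tool-using loop around a chat model.

    `model` takes the message history and returns a JSON string.
    The reply format is a hypothetical convention, not a GLM-5 protocol.
    """
    messages = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append({"role": "assistant", "content": reply})
        action = json.loads(reply)

        # The model signals completion with a "final" field.
        if "final" in action:
            return str(action["final"])

        # Otherwise run the requested tool and feed the result back.
        tool = tools[action["tool"]]
        result = tool(**action.get("args", {}))
        messages.append(
            {"role": "tool", "content": json.dumps({"result": result})}
        )
    raise RuntimeError("agent did not finish within max_steps")
```

In production the `model` argument would wrap a real API call. Capping the loop with `max_steps` guards against a model that never emits a final answer.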
