Qwen3 VL 32B Instruct

Its optimized instruction-following makes it well suited to platforms that prioritize user experience in visual data understanding, creative content generation, and interactive visual assistance.

Qwen3 VL 32B Instruct can be integrated into multimodal applications that require precise interaction between images and text.
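To illustrate what that integration can look like, here is a minimal sketch that builds a request pairing one image with a text instruction. It assumes the model is served behind an OpenAI-compatible chat-completions endpoint, which many hosting providers offer. The model ID `qwen3-vl-32b-instruct` and the helper names are hypothetical. The code only constructs and prints the JSON body and does not send it.

```python
import base64
import json
from typing import Any

# Hypothetical model identifier; the real ID depends on the provider serving the model.
MODEL_ID = "qwen3-vl-32b-instruct"


def image_to_data_url(image_bytes: bytes, mime_type: str = "image/png") -> str:
    """Encode raw image bytes as a base64 data URL (a form many OpenAI-compatible APIs accept)."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


def build_vision_request(
    instruction: str,
    image_url: str,
    max_tokens: int = 512,
) -> dict[str, Any]:
    """Build an OpenAI-style chat-completions body that pairs one image with a text instruction.

    Assumption: the serving endpoint accepts "image_url" and "text" content parts.
    """
    return {
        "model": MODEL_ID,
        "messages": [
            {
                "role": "user",
                # Content parts: the image first, then the instruction that refers to it.
                "content": [
                    {"type": "image_url", "image_url": {"url": image_url}},
                    {"type": "text", "text": instruction},
                ],
            }
        ],
        "max_tokens": max_tokens,
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real image, e.g. open("chart.png", "rb").read().
    fake_png = b"\x89PNG\r\n\x1a\n"
    body = build_vision_request(
        "Describe this chart in two sentences.",
        image_to_data_url(fake_png),
    )
    print(json.dumps(body, indent=2))
```

Sending the body is left to whatever HTTP client or SDK the provider documents. If the endpoint supports it, a public image URL can be passed instead of a data URL.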

Qwen3 VL 32B Instruct is a vision-language large model specialized for instruction-following in tasks such as image description, visual dialogue, and content generation. It is a "non-thinking only" version, meaning it responds directly rather than producing an explicit reasoning trace. It is optimized to interpret visual inputs and generate coherent, context-aware text in response to images and instructions.

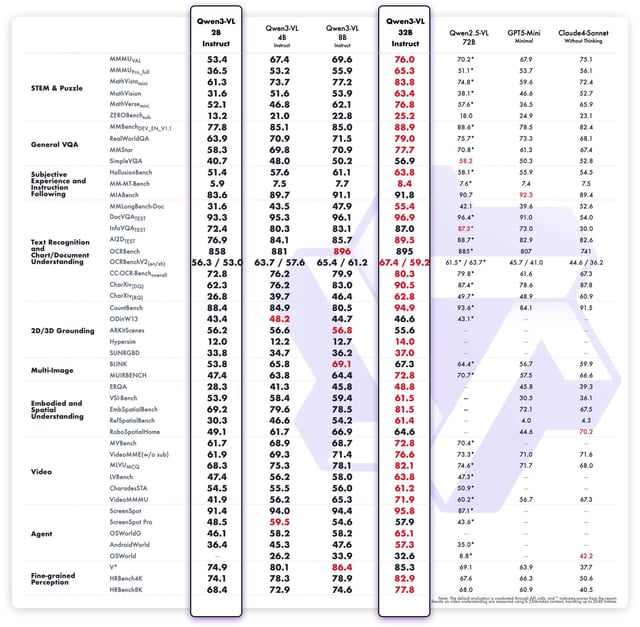

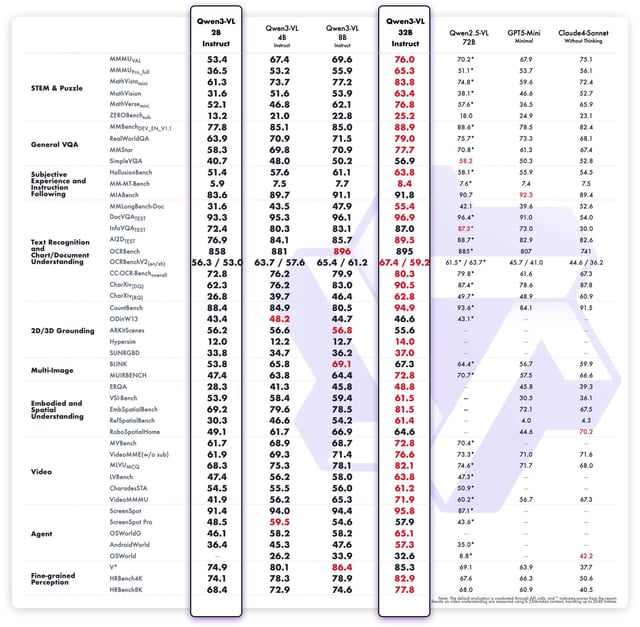

vs Qwen3 VL 32B Base: The Instruct version is fine-tuned for instruction adherence and produces more context-relevant, accurate descriptions, whereas the Base model targets general multimodal understanding.

vs OpenAI GPT-4 (with vision): Qwen3 VL 32B Instruct is optimized specifically for visual instruction-following and content generation, with fewer hallucinations on visual inputs; GPT-4 offers broader general-purpose capabilities but can be less specialized in following visual instructions.

vs Claude 4.5 Visual: Qwen3 VL 32B Instruct delivers stronger image description and visual dialogue, with a focus on following visual instructions, while Claude often excels at text-based reasoning and long-context management but is somewhat less specialized for vision.

vs DeepSeek V3.1: DeepSeek V3.1 is a text-only language model, so Qwen3 VL 32B Instruct is the better fit for tasks that depend on image input, such as detailed descriptions and content generation grounded in visual material.
